Knowledge, Attitudes, and Practices Related to Artificial Intelligence Among Medical Students and Academics in Saudi Arabia: A Systematic Review

Abstract

This systematic review analyzes the literature published between January 2020 and February 2025 on artificial intelligence (AI) applications in medical education in Saudi Arabia. The review focuses on the nature and scope of AI applications, types of evidence synthesis, the geographical distribution of authorship, research quality, the challenges encountered, and research gaps within Saudi Arabia. Studies were retrieved from the PubMed, Google Scholar, ProQuest, and Web of Science databases, following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines. We included studies that explored the knowledge, attitudes, and practices of medical students and academics in Saudi Arabia regarding AI. Titles and abstracts were first screened against the inclusion criteria, and the full texts of eligible studies were then reviewed. A standardized form was used to extract data, including author information, study population, research objectives, and key findings.

The review identified key areas of focus, including personalized learning, interactive simulations, and real-time feedback in medical education. Most studies discussed the potential of AI tools to improve student engagement and clinical decision-making skills. However, significant challenges were reported, such as insufficient faculty training, data privacy concerns, and disparities in technological infrastructure. Realizing the considerable potential of AI in medical education in Saudi Arabia will require proper faculty training and standardized AI curricula. More research is needed to assess the long-term effects of AI on educational outcomes and to find ways to overcome the current barriers to its successful implementation.

Article type: Review Article

Keywords: artificial intelligence, educational technology, faculty training, medical education, Saudi Arabia

License: Copyright © 2025, Alsahafi et al. CC BY 4.0. This is an open access article distributed under the terms of the Creative Commons Attribution License CC-BY 4.0, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Article links: DOI: 10.7759/cureus.83437 | PubMed: 40462802 | PMC: PMC12130740



Introduction and background

The concept of artificial intelligence (AI) traces back to Turing, who explored intelligent reasoning that could be integrated into machines, and the term itself was introduced by McCarthy in 1956 [ref. 1,ref. 2,ref. 3]. As AI capabilities have advanced, the definition of AI has continued to evolve [ref. 3]. With the advent of recent AI tools and technologies, including generative pre-trained transformers, natural language processing (NLP), expert systems, machine learning, intelligent agents, personalized learning, and virtual learning environments, AI has come to denote the capability of a digital machine to perform tasks typically associated with intelligent beings. These tasks include planning, diagnosing disease, summarizing, self-correcting, decision-making, creative work, and improving learning, teaching, assessment, and educational administration [ref. 3,ref. 4,ref. 5,ref. 6,ref. 7].

Integrating AI into the education system takes considerable effort and guidance. Its importance in education has been highlighted through various initiatives and reports at both the international and national levels. In the United States, for instance, organizations developing AI-based personalized learning platforms have received significant funding to improve student academic performance and reduce educational disparities for disadvantaged individuals [ref. 6,ref. 8,ref. 9]. Similarly, China has launched a strategic plan to modernize education by promoting the use of intelligent technology in classrooms and expanding professional development opportunities for educators in AI-related fields [ref. 6,ref. 10,ref. 11,ref. 12]. Building on these international developments, Saudi Arabia has also embraced AI integration, particularly through Vision 2030 and the National Transformation Program. These efforts are expected to enhance AI technology and innovation in education [ref. 3,ref. 13]. To make the most of these government efforts, the Organization for Economic Co-operation and Development (OECD) has recommended that educational researchers engage in applied research to advance educational practices [ref. 14].

Given AI’s potential to advance education, the technology has become a key focus for educational researchers, policymakers, and health practitioners. However, compared with research on other educational technologies such as gamification and blended learning, research on AI in education tends to be fragmented and less structured. Further research is therefore required to understand whether and how these emerging technologies and applications benefit education [ref. 6,ref. 15].

Unfamiliarity with AI technologies, together with the need for governance and regulation concerning privacy, ethics, legal issues, equity, security, and readiness, poses challenges to effectively introducing and integrating AI tools into schools and universities [ref. 2,ref. 16–ref. 18]. Gordon et al. reviewed 278 publications covering various applications of AI in medical education, including admissions, teaching, assessment, and clinical reasoning, and found that most papers focused on the early adoption phases of AI in education, with only a few reporting its role in driving long-term educational change [ref. 19]. A scoping review provided a thematic analysis of 22 publications on the use of AI in undergraduate medical education; it found substantial heterogeneity and little consensus across studies, with no standardized framework for integrating AI into the undergraduate medical curriculum [ref. 20]. Preiksaitis and Rose (2023) reviewed 41 publications on generative AI in medical education and identified a variety of applications, including self-directed learning, simulation, and writing support. However, their review also highlighted significant concerns, such as academic integrity, the accuracy of information, and the potential negative impact of generative AI on learning outcomes [ref. 21].

Numerous systematic reviews have also analyzed papers on the use of AI in medical education, focusing on the trends in studies such as subject areas, geographical distribution, and textual patterns [ref. 22]. Others have investigated specific disciplines like languages, mathematics, and medicine [ref. 23], individual educational activities such as assessment [ref. 24], and particular applications including assistive robots, adaptive learning, or proctoring systems [ref. 25].

Literature suggests that existing knowledge of AI tools in the educational systems of the Gulf Cooperation Council countries, including Saudi Arabia, remains fragmented and incomplete [ref. 13,ref. 26], despite substantial investments and efforts to advance technology and innovation in the field [ref. 13]. AI has been reported to support the development of interactive simulations, virtual patients, and real-time feedback, which help medical students refine their clinical decision-making skills. It also increases access to medical education, especially in remote areas, thus expanding opportunities for quality education nationwide.

Despite its benefits, the adoption of AI in medical education within Saudi Arabia encounters certain challenges, including inadequate faculty training in AI tools, concerns about data confidentiality, and the absence of structured AI education in medical curricula. Moreover, over-reliance on AI might reduce human interaction and critical thinking skills. Differences in technological infrastructure across institutions may also limit the widespread implementation of AI-based education [ref. 27–ref. 35]. However, these studies have focused on the cognitive aspects and perceptions of medical students regarding the use of AI in their teaching and learning. Therefore, a more comprehensive approach is required to fully examine the role of AI in the educational system in Saudi Arabia.

To our knowledge, the impact of AI tools on medical education within Saudi Arabia has not been systematically evaluated. Therefore, this systematic review aims to analyze the existing literature on AI applications in medical education in Saudi Arabia, during the period from January 2020 to February 2025. This review highlights the nature and scope of AI applications, types of evidence syntheses, geographical distribution of authorship, quality, challenges encountered, and existing research gaps. The research questions were developed through a step-by-step process. We began by searching the existing literature to identify key themes and gaps. Based on that, we drafted an initial list of possible questions. After some discussion and refinement, we settled on the final set to ensure they matched the aims of our review. These questions were chosen as they best reflected the main areas we wanted to explore regarding AI in medical education within Saudi Arabia.

Research questions

RQ1: What is the focus and extent of research on AI tools in medical education within Saudi Arabia?

RQ2: What types of evidence syntheses have been conducted in the context of AI integration into medical education in Saudi Arabia?

RQ3: What is the geographical distribution of AI-related research in medical education within Saudi Arabia?

RQ4: What is the quality of research on AI tools in medical education within Saudi Arabia?

RQ5: What are the concerns expressed by students and academics in Saudi Arabia regarding the implementation of AI tools in medical education?

RQ6: What are the current research gaps in the integration of AI within medical education?

Review

Methods

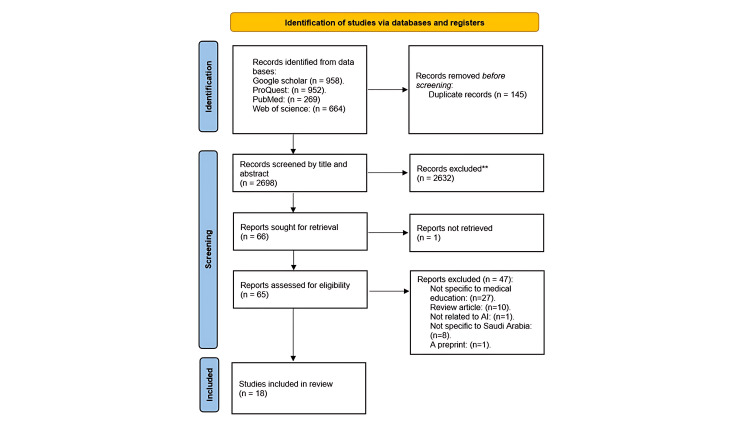

This systematic review followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) 2020 guidelines, as illustrated in Figure 1 [ref. 36]. This study was approved by the King Abdullah International Medical Research Center (KAIMRC) Institutional Review Board in Jeddah, Saudi Arabia (study number: NRJ25/068/3).

Study Design and Search Strategy

A comprehensive systematic review was conducted from February 2025 to March 2025 using a reproducible search strategy. The primary electronic research databases PubMed, ProQuest, and Web of Science were systematically searched; the detailed search strategy is outlined in Table 1. In addition, the authors searched Google Scholar using the terms “artificial intelligence” AND “medical education” AND “Saudi Arabia”.

Table 1: Review search string

| Search string | |

| Artificial intelligence | “Artificial intelligence” OR “machine intelligence” OR “AI” OR “ChatGPT” OR “machine learning” |

| AND | |

| Medical education | "Medical education" OR "health professions education" OR "clinical education" OR "medical curriculum" OR "undergraduate medical students" OR "medical residents" OR "healthcare professionals" OR "medical faculty" |

| AND | |

| Saudi Arabia | "Saudi Arabia" OR "Kingdom of Saudi Arabia" OR "KSA" OR "Saudi" |
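The search string in Table 1 combines three concept blocks: synonyms within a block are joined with OR, and the blocks are joined with AND, so a record must match at least one term from every concept. The minimal Python sketch below illustrates that construction. The helper names (`or_block`, `build_query`) are hypothetical, and the generic syntax is an assumption: PubMed, ProQuest, and Web of Science each apply their own field tags and phrase-matching rules, which are not reproduced here.

```python
# Concept blocks transcribed from Table 1 (terms within a block are OR-ed).
AI_TERMS = ["Artificial intelligence", "machine intelligence", "AI", "ChatGPT", "machine learning"]
EDUCATION_TERMS = [
    "Medical education", "health professions education", "clinical education",
    "medical curriculum", "undergraduate medical students", "medical residents",
    "healthcare professionals", "medical faculty",
]
SAUDI_TERMS = ["Saudi Arabia", "Kingdom of Saudi Arabia", "KSA", "Saudi"]


def or_block(terms: list[str]) -> str:
    """Quote every term as a phrase and join the terms with OR."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(*blocks: list[str]) -> str:
    """AND the OR-blocks together so a record must match every concept."""
    return " AND ".join(or_block(block) for block in blocks)


if __name__ == "__main__":
    print(build_query(AI_TERMS, EDUCATION_TERMS, SAUDI_TERMS))
```

Keeping each concept as a separate list makes it easy to add a synonym to one block without touching the Boolean structure.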

Eligibility Criteria

This review analyzed only formally published primary research studies that investigated the attitudes, knowledge, and practices of medical students and academics regarding AI tools in medical education within Saudi Arabia. The screening process was conducted by the authors, who independently reviewed titles, abstracts, and full texts. The inclusion and exclusion criteria are detailed in Table 2. Only those research articles available as full text online were included. To ensure thorough coverage, we also reviewed the reference lists of the included papers and retrieved any additional articles that our search might have missed.

Table 2: Inclusion and exclusion criteria (AI: artificial intelligence)

| Inclusion criteria | Exclusion criteria |

| Articles addressing the study objectives | Articles focusing on AI applications in diagnostics, professional training, or clinical practice |

| Articles written in English | Articles that include Saudi Arabia alongside other countries |

| Articles indexed in PubMed, ProQuest, or Web of Science | Articles testing an AI model for clinical purposes |

| Peer-reviewed original research articles | Articles in which the population consists solely of healthcare professionals or clinicians |

| Articles focusing on AI applications in medical education within Saudi Arabia | |

| Articles published between January 2020 and February 2025 | |
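Several rows of Table 2 are mechanical checks that can be expressed as a filter, whereas the topical rows require reviewer judgment. The sketch below is a hypothetical illustration, not the authors' screening tool: the `Candidate` record, its field names, and `passes_checkable_criteria` are assumptions, and the "January 2020 to February 2025" window is interpreted as 1 January 2020 to 28 February 2025 inclusive.

```python
from dataclasses import dataclass
from datetime import date

INDEXES = frozenset({"PubMed", "ProQuest", "Web of Science"})
WINDOW_START = date(2020, 1, 1)
WINDOW_END = date(2025, 2, 28)


@dataclass(frozen=True)
class Candidate:
    """Hypothetical screening record; the field names are illustrative only."""
    published: date
    language: str
    indexed_in: frozenset[str]
    countries: frozenset[str]
    peer_reviewed_original: bool
    clinicians_only: bool          # population is solely healthcare professionals or clinicians
    tests_clinical_ai_model: bool  # article tests an AI model for clinical purposes


def passes_checkable_criteria(c: Candidate) -> bool:
    """Apply only the objectively checkable rows of Table 2.

    Topical judgments (does the article address the study objectives, or does
    it focus on diagnostics or clinical practice?) still need human reviewers.
    """
    return (
        WINDOW_START <= c.published <= WINDOW_END
        and c.language == "English"
        and bool(c.indexed_in & INDEXES)
        and c.countries == frozenset({"Saudi Arabia"})  # multinational studies are excluded
        and c.peer_reviewed_original
        and not c.clinicians_only
        and not c.tests_clinical_ai_model
    )
```

A record that passes this filter would still go to title, abstract, and full-text review; the filter only removes records that fail an unambiguous criterion.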

Data Extraction

All records retrieved by the systematic search were compiled, and duplicates were removed. The titles and abstracts of the remaining articles were then screened, and only those that met the inclusion criteria underwent a full-text review. The data extracted for this review included publication and authorship details (e.g., journal Q-rank, publication name, number of authors, and author affiliations), study objectives, characteristics of the study population and subjects, study design, and key findings.
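Duplicate removal across databases is typically done by matching records on a stable identifier. The sketch below assumes a hypothetical `Record` type, matches on the DOI when one is present, and otherwise falls back to a normalized title. The review does not state which matching rule was used, and this simple rule would miss a duplicate that carries a DOI in one database but not in another.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Record:
    """Hypothetical minimal record exported from a bibliographic database."""
    title: str
    doi: Optional[str] = None
    source: str = ""


def dedup_key(rec: Record) -> str:
    """Prefer the DOI; otherwise fall back to a normalized title."""
    if rec.doi:
        return "doi:" + rec.doi.strip().lower()
    # Lowercase and drop everything except letters and digits so that trivial
    # formatting differences between databases do not hide a duplicate.
    return "title:" + re.sub(r"[^a-z0-9]", "", rec.title.lower())


def remove_duplicates(records: list[Record]) -> list[Record]:
    """Keep the first occurrence of each key, preserving input order."""
    seen: set[str] = set()
    unique = []
    for rec in records:
        key = dedup_key(rec)
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique
```

Because the first occurrence wins, the order in which database exports are concatenated decides which copy of a duplicate is retained.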

Quality Assessment

To evaluate the quality of the studies analyzed, we used the Joanna Briggs Institute (JBI) critical appraisal checklists for cross-sectional and qualitative studies, as shown in Table 3. These tools assess the methodological rigor of each study and help identify potential biases in its design, execution, and analysis [ref. 37,ref. 38].

Table 3: JBI critical appraisal checklist items for cross-sectional and qualitative studies (JBI: Joanna Briggs Institute)

| Cross-sectional studies | Qualitative studies |

| 1 – Were the study objectives clearly stated? 2 – Were participants and settings appropriately selected? 3 – Was the sample size justified? 4 – Were exposure (independent variables) and outcome (dependent variables) measured reliably? 5 – Were confounding factors identified and appropriately controlled? 6 – Were valid and reliable measurement tools used? 7 – Was data analysis conducted with appropriate statistical methods? 8 – Were the results clearly presented? | 1 – Is there congruity between the research methodology and the research question? 2 – Is the philosophical perspective stated and justified? 3 – Is the study design appropriate for the research question? 4 – Are the recruitment methods clearly described and appropriate? 5 – Is data collection clearly explained and justified? 6 – Is the data analysis process rigorous and aligned with qualitative methodologies? 7 – Are the findings supported by direct quotes or evidence from participants? 8 – Has the researcher considered their influence on the study? 9 – Are ethical considerations, including approval and informed consent, addressed? 10 – Do the conclusions align with the research findings? |

We categorized the articles into two groups based on their methodology: cross-sectional studies or qualitative studies. Each study was then assessed using JBI’s eight-question checklist for cross-sectional studies or its 10-question checklist for qualitative studies. Each response was classified as yes, no, unclear, or not applicable. A "yes" response was scored 1, while "no," "unclear," and "not applicable" responses were scored 0. Therefore, cross-sectional studies could achieve a maximum quality score of 8, and qualitative studies a maximum score of 10.
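The scoring rule can be written as a short function. This sketch takes the item counts from Table 3 (eight items for cross-sectional studies, 10 for qualitative studies); the response codes `Y`, `N`, `U`, and `NA` and the function name `jbi_score` are hypothetical.

```python
# Item counts per checklist, as listed in Table 3.
CHECKLIST_ITEMS = {"cross-sectional": 8, "qualitative": 10}

# Hypothetical response codes: Y = yes, N = no, U = unclear, NA = not applicable.
# Only "yes" earns a point, per the scoring rule described above.
POINTS = {"Y": 1, "N": 0, "U": 0, "NA": 0}


def jbi_score(design: str, responses: list[str]) -> int:
    """Sum the points for one study after checking the response count."""
    expected = CHECKLIST_ITEMS[design]
    if len(responses) != expected:
        raise ValueError(f"{design} checklist has {expected} items, got {len(responses)}")
    return sum(POINTS[r] for r in responses)


# Responses for Al Shahrani et al. (Table 5) and Salih (Table 6).
print(jbi_score("cross-sectional", ["Y", "Y", "Y", "Y", "N", "Y", "Y", "Y"]))  # 7
print(jbi_score("qualitative", ["Y", "N", "Y", "N", "Y", "Y", "Y", "Y", "N", "Y"]))  # 7
```

Checking the response count guards against applying the wrong checklist to a study.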

Data Synthesis

The analyzed studies used a wide range of self-developed data collection tools, producing data too heterogeneous to be pooled statistically, so a meta-analysis was not possible. Instead, we provide a thematic summary of the key findings across all studies, which allowed us to identify and highlight the main patterns and insights. Where applicable, results are reported as mean ± standard error.

Results

Study Selection

As illustrated in Figure 1, 2,843 articles were identified across all databases. Initially, 145 duplicate records were removed. Then, following an initial screening of titles and abstracts, 2,632 articles were excluded. Subsequently, full-text articles were retrieved, with one being excluded due to unavailability. The remaining 67 articles underwent full-text screening. Based on this, 18 articles met the inclusion criteria and were included for full-text analysis.

Quality Assessment

To assess the quality of the analyzed studies, the journal quartile (Q) ranking of each article was first examined (Table 4). Only three articles were published in Q1 journals [ref. 33,ref. 34,ref. 39]; seven appeared in Q2 journals, and the remaining eight were published in Q3 (n=3) or Q4 (n=5) journals.

Table 4: Summary of Q-rank of the studies analyzed

| Article | Journal name | Q-rank |

| Alharbi et al., 2024 [ref. 39] | Scientific Reports | Q1 |

| Almarzouki et al., 2025 [ref. 33] | BMC Medical Education | Q1 |

| Elhassan et al., 2025 [ref. 34] | JMIR Medical Education | Q1 |

| Al Shahrani et al., 2024 [ref. 30] | Healthcare | Q2 |

| Alwadaani et al., 2024 [ref. 31] | Journal of Multidisciplinary Healthcare | Q2 |

| Bin Dahmash et al., 2020 [ref. 27] | The British Journal of Radiology | Q2 |

| Syed and Alrawi, 2023 [ref. 40] | Medicina | Q2 |

| Syed et al., 2024 [ref. 41] | Medical Science Monitor: International Medical Journal of Experimental and Clinical Research | Q2 |

| Alrashed et al., 2024 [ref. 42] | Advances in Medical Education and Practice | Q2 |

| Alghamdi and Alashban, 2024 [ref. 28] | Journal of Radiation Research and Applied Sciences | Q2 |

| Salih, 2024 [ref. 43] | Cureus | Q3 |

| Abdelnasser et al., 2025 [ref. 32] | Annals of Forest Research | Q3 |

| Alqarni et al., 2024 [ref. 29] | Forum for Linguistic Studies | Q3 |

| Gowdar et al., 2024 [ref. 44] | Journal of Pharmacy and Bioallied Sciences | Q4 |

| Alshanberi et al., 2024 [ref. 45] | Journal of Pharmacy and Bioallied Sciences | Q4 |

| Fadil and Alahmadi, 2024 [ref. 46] | Tropical Journal of Pharmaceutical Research | Q4 |

| Alwadaani, 2024 [ref. 47] | Majmaah Journal of Health Sciences | Q4 |

| ALruwail et al., 2025 [ref. 35] | Forum for Linguistic Studies | Q4 |
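The quartile distribution in Table 4 can be tallied directly from its Q-rank column. This minimal sketch transcribes that column by hand (the variable names are illustrative) and prints each quartile's share of the 18 studies.

```python
from collections import Counter

# Q-rank column of Table 4, one entry per study, in table order.
Q_RANKS = ["Q1"] * 3 + ["Q2"] * 7 + ["Q3"] * 3 + ["Q4"] * 5

counts = Counter(Q_RANKS)
total = len(Q_RANKS)
for quartile in sorted(counts):
    n = counts[quartile]
    print(f"{quartile}: {n}/{total} ({100 * n / total:.1f}%)")
print(f"Q3 or Q4: {counts['Q3'] + counts['Q4']}/{total}")
```

Run as-is, it reports 3/18 (16.7%) Q1, 7/18 (38.9%) Q2, 3/18 (16.7%) Q3, and 5/18 (27.8%) Q4 articles, with 8/18 in Q3 or Q4 combined.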

The next step in the quality assessment involved evaluating each study using the JBI’s assessment checklist. The appropriate version of the JBI checklist was applied based on the study design. The average quality scores were 5 ± 0.43 (n=15) for cross-sectional studies and 5.3 ± 1.20 (n=3) for qualitative studies (Tables 5, 6).
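The mean ± standard error summary can be recomputed from the per-study scores. The sketch below assumes that the standard error is the sample standard deviation (n - 1 denominator) divided by the square root of n, which the review does not state explicitly; under that assumption, the three qualitative scores listed in Table 6 reproduce the reported 5.3 ± 1.20. The function name `mean_and_se` is illustrative.

```python
import math
import statistics


def mean_and_se(scores: list[float]) -> tuple[float, float]:
    """Return the mean and the standard error (sample SD / sqrt(n))."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two scores to estimate the standard error")
    mean = statistics.mean(scores)
    se = statistics.stdev(scores) / math.sqrt(n)  # stdev uses the n - 1 denominator
    return mean, se


qualitative_scores = [7, 6, 3]  # Table 6: Salih, Alqarni et al., Alrashed et al.
m, se = mean_and_se(qualitative_scores)
print(f"{m:.1f} ± {se:.2f} (n={len(qualitative_scores)})")  # 5.3 ± 1.20 (n=3)
```

Swapping `statistics.stdev` for `statistics.pstdev` would give the population-SD variant, which yields a slightly smaller standard error.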

Table 5: JBI critical appraisal scores for the cross-sectional studies (JBI: Joanna Briggs Institute; Y: yes; N: no)

| Source | Q1 | Q2 | Q3 | Q4 | Q5 | Q6 | Q7 | Q8 | Score |

| Alharbi et al., 2024 [ref. 39] | Y | Y | Y | Y | Y | Y | Y | Y | 8 |

| Al Shahrani et al., 2024 [ref. 30] | Y | Y | Y | Y | N | Y | Y | Y | 7 |

| Abdelnasser et al., 2025 [ref. 32] | Y | Y | Y | Y | N | Y | Y | Y | 7 |

| Almarzouki et al., 2025 [ref. 33] | Y | Y | N | Y | N | Y | Y | Y | 6 |

| Elhassan et al., 2025 [ref. 34] | Y | Y | Y | N | N | Y | Y | Y | 6 |

| ALruwail et al., 2025 [ref. 35] | Y | Y | Y | Y | N | N | Y | N | 5 |

| Alghamdi and Alashban, 2024 [ref. 28] | Y | Y | N | Y | Y | N | Y | N | 5 |

| Fadil and Alahmadi, 2024 [ref. 46] | Y | Y | N | Y | N | N | Y | Y | 5 |

| Syed and Alrawi, 2023 [ref. 40] | Y | Y | N | Y | N | N | Y | Y | 5 |

| Syed et al., 2024 [ref. 41] | Y | Y | Y | Y | N | N | N | Y | 5 |

| Alshanberi et al., 2024 [ref. 45] | Y | Y | Y | N | N | N | Y | N | 4 |

| Alwadaani, 2024 [ref. 47] | Y | Y | Y | N | N | N | Y | N | 4 |

| Alwadaani et al., 2024 [ref. 31] | Y | Y | N | Y | N | N | N | N | 3 |

| Gowdar et al., 2024 [ref. 44] | Y | Y | N | N | N | N | N | N | 2 |

| Bin Dahmash et al., 2020 [ref. 27] | Y | N | N | N | N | N | Y | N | 2 |

Table 6: JBI critical appraisal scores for the qualitative studies (JBI: Joanna Briggs Institute; Y: yes; N: no)

| Source | Q1 | Q2 | Q3 | Q4 | Q5 | Q6 | Q7 | Q8 | Q9 | Q10 | Score |

| Salih, 2024 [ref. 43] | Y | N | Y | N | Y | Y | Y | Y | N | Y | 7 |

| Alqarni et al., 2024 [ref. 29] | Y | N | N | N | Y | Y | Y | N | Y | Y | 6 |

| Alrashed et al., 2024 [ref. 42] | N | N | Y | Y | N | N | N | N | N | Y | 3 |

Study Characteristics

Tables 7–8 summarize the characteristics and the key findings of the analyzed studies. Most studies provided complete data on demographic characteristics (e.g., gender, age, and education level). Geographically, 27.8% (5/18) of the studies were conducted in Riyadh [ref. 39,ref. 27,ref. 34,ref. 40,ref. 41], 16.7% (3/18) in Jeddah [ref. 32,ref. 33,ref. 45], and 11.1% (2/18) in the Eastern Region [ref. 47,ref. 31]. The remaining studies were conducted either across multiple regions [ref. 30,ref. 28,ref. 42,ref. 46] (22.2%, 4/18) or in one of the following cities: Bishah [ref. 29], Aljouf [ref. 35], Alkharj [ref. 44], or Jazan [ref. 43].
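The regional percentages can be checked by tallying the location column of Table 7. In this sketch the mapping from reference number to location is transcribed by hand, with Al-Ahsa (ref. 47) counted under the Eastern Region as in the text; the dictionary name `LOCATION` is illustrative.

```python
from collections import Counter

# Study location by reference number, transcribed from Table 7.
# Al-Ahsa (ref. 47) is grouped with the Eastern Region, as in the text.
LOCATION = {
    39: "Riyadh", 27: "Riyadh", 34: "Riyadh", 40: "Riyadh", 41: "Riyadh",
    32: "Jeddah", 33: "Jeddah", 45: "Jeddah",
    47: "Eastern Region", 31: "Eastern Region",
    30: "Multiple regions", 28: "Multiple regions", 42: "Multiple regions", 46: "Multiple regions",
    29: "Bishah", 35: "Aljouf", 44: "Alkharj", 43: "Jazan",
}

counts = Counter(LOCATION.values())
total = len(LOCATION)
for place, n in counts.most_common():
    print(f"{place}: {n}/{total} ({100 * n / total:.1f}%)")
```

Keying the mapping by reference number prevents a study from being counted twice.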

Table 7: Characteristics of the analyzed studies (AI: artificial intelligence)

| Study | Year | City | Study type | Method | Study population | Number of participants | Participants characteristics |

| Abdelnasser et al. [ref. 32] | 2025 | Jeddah | Cross-sectional study | Online questionnaire | Medical educators, Makkah region | 220 | 126 (57.27%) females and 94 (42.73%) males. 61 (27.7%) lecturers, 53 (24.1%) assistant professors, 53 (24.1%) demonstrators, 33 (15%) associate professors, and 20 (9.1%) professors |

| Al Shahrani et al. [ref. 30] | 2024 | Multiple regions in Saudi Arabia | Cross-sectional study | Online questionnaire | Medical students | 572 | 300 (52.4%) females and 272 (47.6%) males. 250 (43.7%) from the western region, 149 (26.0%) from the central region, 80 (14%) from the eastern region, 56 (9.8%) from the southern region, and 37 (6.5%) from the northern region. The majority of the participants (64.3%) were in clinical years (third to fifth year of the medical program) |

| Alghamdi and Alashban [ref. 28] | 2024 | Multiple regions in Saudi Arabia | Cross-sectional study | Online questionnaire | Freshly graduated medical students | 1,212 | Students were recruited from 32 out of 39 Saudi universities |

| Alharbi et al. [ref. 39] | 2024 | Riyadh | Cross-sectional study | Online questionnaire | Undergraduate healthcare students | 354 | 242 (68.4%) males and 112 (31.6%) females. 39.8% were below 24 years old. 124 (35%) were from the College of Pharmacy, 135 (38.1%) were from the College of Nursing, and 95 (26.8%) were from the College of Emergency Medical Services |

| Almarzouki et al. [ref. 33] | 2025 | Jeddah | Cross-sectional study | Online questionnaire | Undergraduate medical students | 354 | 226 (63.8%) males and 128 (36.2%) females. Mean age: 21.4 years. 60% were 2nd-year medical students |

| Alqarni et al. [ref. 29] | 2024 | Bishah | Descriptive study | Online questionnaire | Undergraduate medical students | 54 | 38 (70.4%) males and 16 (29.6%) females. The majority of the participants had limited or moderate experience with AI tools (66.6%), while 22.2% had never used them, and only 11.1% had extensive experience |

| Alrashed et al. [ref. 42] | 2024 | Multiple regions in Saudi Arabia | Randomized qualitative approach | Semi-structured interviews | Medical students enrolled in the medical education program | 13 | Age between 20 and 24 years |

| ALruwail et al. [ref. 35] | 2025 | Aljouf | Cross-sectional study | Online questionnaire | Health science students | 384 | 220 (57.3%) males and 164 (42.7%) females. 174 (45.3%) under 21 and 210 (54.7%) over 21 years |

| Alshanberi et al. [ref. 45] | 2024 | Jeddah | Cross-sectional study | Online questionnaire | Students and faculty members at a medical college | 128 | 102 (79.7%) females and 26 (20.3%) males. 109 (85.2%) under 25, 10 (7.8%) aged 26-35, and 9 (7%) over 35 years. 116 (90.6%) were students, and 12 (9.4%) were faculty members |

| Alwadaani [ref. 47] | 2024 | Al-Ahsa | Cross-sectional study | Online questionnaire | Undergraduate medical students and medical practitioners | 159 | 66 (41.5%) were males and 93 (58.5%) were females. Mean age of the participants: 28.9 ± 8.8 years. 102 (64.2%) undergraduates, 19 (11.9%) fellowship, 15 (9.4%) membership, 14 (8.8%) MBBS or equivalent, and 6 (3.8%) postgraduates |

| Alwadaani et al. [ref. 31] | 2024 | Eastern Region | Cross-sectional study | Online questionnaire | Undergraduate medical students | 303 | 129 (42.57%) females and 174 (57.43%) males. 93.73% of participants were aged between 21-25 years |

| Bin Dahmash et al. [ref. 27] | 2020 | Riyadh | Cross-sectional study | Online questionnaire | Undergraduate medical students | 476 | 289 (60.5%) males and 187 (39.5%) females |

| Elhassan et al. [ref. 34] | 2025 | Riyadh | Cross-sectional study | Online questionnaire | Undergraduate medical students | 293 | 95 (32.4%) males and 198 (67.6%) females |

| Fadil and Alahmadi [ref. 46] | 2024 | Multiple regions in Saudi Arabia | Cross-sectional study | Online questionnaire | Undergraduate medical students | 463 | 175 (37.8%) males and 288 (62.2%) females. 59.6% were between 23-25 years old. 38.4% were between 26-30 years old. 71.3% were from the central region |

| Gowdar et al. [ref. 44] | 2024 | Alkharj | Cross-sectional study | Online questionnaire | Undergraduate dental students and dental practitioners | 100 | Dental students |

| Salih [ref. 43] | 2024 | Jazan | Qualitative case study | Direct interview. Focus group discussions | Faculty members. Undergraduate medical students | 45 | 11 faculty members (7 males and 4 females; mean age: 48.5 years). 34 students (16 males and 18 females; mean age: 22.6 years) |

| Syed and Alrawi [ref. 40] | 2023 | Riyadh | Cross-sectional study | Online questionnaire | Undergraduate pharmacy students | 157 | 118 (75.2%) males and 39 (24.8%) females. 101 (64.3%) were aged 18-22 years |

| Syed et al. [ref. 41] | 2024 | Riyadh | Cross-sectional study | Online questionnaire | Researchers and academics | 201 | 140 (69.7%) males and 61 (30.3%) females. 54.2% were 31-35 years old. 43.8% were researchers |

Table 8: Main research objectives and key findings of the analyzed studies (AI: artificial intelligence)

| Author | Year | Main research objectives | Key findings |

| Abdelnasser et al. [ref. 32] | 2025 | To assess the familiarity level of medical educators with AI and robotics in medical education and healthcare systems. To explore medical educators’ beliefs, perspectives, and expectations regarding the present and future integration of AI and robotics in diverse medical disciplines. To explore the legal liability issues that could arise from the use of AI and robotics in medical education and healthcare systems | Female participants were more familiar with AI, with 63.9% compared to 36.1% of males. They also had a more positive attitude towards integrating AI into medical practice and education. Overall, 70.5% of all participants, regardless of gender, supported adding AI to the medical school curriculum |

| Al Shahrani et al. [ref. 30] | 2024 | To evaluate the preparedness of medical students in Saudi Arabia regarding AI technologies and their applications. To assess the current state of AI education in Saudi medical colleges and AI use and future perspectives for medical students | Only 14.5% of participants received AI education as part of their curriculum. 34.4% gained AI education through extracurricular activities, mainly self-study. 93.2% had used AI applications before. AI was mostly used for querying medical knowledge, conducting research, and explaining pathologies. 46.5% said AI use had not influenced their specialty choices. Most participants could not define or explain basic AI concepts. Female medical students had higher AI readiness scores. 50% felt confident in assessing information from AI tools |

| Alghamdi and Alashban [ref. 28] | 2024 | To assess the attitudes and perceptions of freshly graduated Saudi Arabian medical students towards AI utilization in the medical sciences. To evaluate the students’ comprehension of AI principles and the extent to which AI is incorporated into their medical education | 83.3% believed AI would play an important role in healthcare. 26% understood the basic computational principles of AI. 56% understood the limitations of AI tools. 69.5% thought all medical students should receive AI training. 8.6% had received AI training |

| Alharbi et al. [ref. 39] | 2024 | To assess the attitudes, opinions, and perceptions towards ChatGPT among healthcare students | 91.2% of participants were familiar with the term "ChatGPT," and 75.1% of them felt comfortable using it in their academic activities. 27.9% found ChatGPT useful for gathering medical information. 87.4% believed ChatGPT had a positive impact on medical education. 60.2% were concerned that ChatGPT may facilitate cheating and plagiarism in academic settings |

| Almarzouki et al. [ref. 33] | 2025 | To evaluate medical students’ current AI knowledge, exposure, and information sources. To evaluate medical students’ understanding of AI role in the medical field | 77.1% of participants reported that they had never taken a course in AI. GPA was not associated with AI use. 78.2% of the participants reported that they knew about AI tools from public media. 18.4% of participants reported that they understood the fundamental basics of AI. 20.1% of the participants reported that their schools offered adequate resources to explore AI applications in medicine |

| Alqarni et al. [ref. 29] | 2024 | To evaluate Saudi students’ perceptions of the effectiveness, reliability, ease of use, preference, and frequency of ChatGPT integration as a tool for English-medium instruction (EMI). To assess Saudi ESP students’ perspectives on the use of ChatGPT for learning medical terminology | Participants reported that ChatGPT was effective in helping them understand medical terminology. Participants reported that ChatGPT offered a user-friendly interface that facilitated medical terminology learning |

| Alrashed et al. [ref. 42] | 2024 | To identify challenges and opportunities associated with the integration of virtual reality (VR), AI, and telemedicine into the Saudi medical curriculum and healthcare system | Participants had mixed opinions on VR, AI, and telemedicine, with some expressing excitement and others showing less interest. Medical students and residents had high expectations for integrating these technologies into education and practice. However, concerns were raised about the preparedness of students, educators, and healthcare professionals to adapt. Many advocated for incorporating VR, AI, and telemedicine modules into the medical curriculum |

| ALruwail et al. [ref. 35] | 2025 | To assess knowledge, attitude, practice, and related factors toward AI among healthcare science students in northern Saudi Arabia. To examine correlations between knowledge, attitude, and practice | 343 (89.3%) of the participants reported that they had not participated in AI courses. Participants showed low knowledge, attitude, and practices about AI applications in medical education. Female participants showed a higher level of knowledge about AI practices |

| Alshanberi et al. [ref. 45] | 2024 | To analyze the level of AI awareness among medical students | 57% learned about AI applications from social media, 7.8% were unaware of AI applications, and the rest gained knowledge from other sources. 77% of participants reported that they were aware of the AI applications in the medical field |

| Alwadaani [ref. 47] | 2024 | To determine the readiness of medical professionals to implement AI by assessing the knowledge, perceptions, and practice of AI among medical students and doctors | Most of the participants reported that they had not received AI training (86.18%). Participants who received prior AI training showed higher knowledge of AI medical applications than those who had not. Gender had no significant effect on AI knowledge. 56% of postgraduate participants and 85.3% of undergraduate participants reported never applying AI in medical practice. 81.13% of participants reported that AI should be included in the medical curriculum |

| Alwadani et al. [ref. 31] | 2024 | To explore undergraduate medical students’ views on AI, assess their understanding of AI, and gauge their confidence in using basic AI tools in the future | 61.72% of participants stated that AI would play an important role in the future. 37.95% of participants stated that they understood the basic computational principles of AI. 59.07% of participants stated that medical students should receive AI training. 15.51% of participants stated that they would be confident in using AI tools in their future careers |

| Bin Dahmash et al. [ref. 27] | 2020 | To assess medical students’ perception of AI and the impact of these perceptions on their choice regarding radiology as a career | 50% of participants stated they had a good understanding of AI. However, when assessed, only 25% of those who claimed to have good AI knowledge answered the questions correctly |

| Elhassan et al. [ref. 34] | 2025 | To examine the familiarity, usage patterns, and attitudes of Alfaisal University medical students toward ChatGPT and other chat-based AI apps in medical education | 97.9% of male participants and 90.0% of female participants reported being familiar with AI applications. 46.3% of males and 30.3% of females stated they used ChatGPT and similar AI tools to answer medical questions. 41.1% of males and 31.3% of females reported using AI applications to explain concepts. 58.4% of participants believed AI applications could enhance medical education. 77.1% of participants thought AI applications might encourage academic dishonesty. 70.3% of participants understood that AI-generated content could sometimes be inaccurate. 46.4% of participants considered using AI tools for coursework completion unethical |

| Fadil and Alahmadi [ref. 46] | 2024 | To evaluate the perceptions, awareness, and opinions of healthcare students towards AI in Saudi Arabia | 86.7% of participants stated they had not received any formal AI training. 84.9% expressed concerns about potentially losing their jobs to AI in the future. 40.6% believed AI devalued the medical professions. 70.8% thought AI could help facilitate patient education. 77.5% agreed that AI knowledge and skills should be included in the academic curriculum |

| Gowdar et al. [ref. 44] | 2024 | To assess the awareness and attitudes of dental students and dental practitioners in Alkharj toward AI | 74% of undergraduate dental students reported not being aware of the working principles of AI. 50% of undergraduate dental students were unaware that AI tools could enhance knowledge on a topic. 95% of undergraduate dental students believed AI training should be included in the medical school curriculum |

| Salih [ref. 43] | 2024 | To explore faculty and students’ perspectives on AI, their use of AI applications, and their perspective on its value and impact on medical education | 81.8% of faculty members and 85.3% of students stated that they had an AI application installed on their mobile phones/tablets. Both faculty members and students believed AI would have a positive impact on medical education. However, they expressed concerns that AI tools could provide incorrect information, offer vague references, and pose a threat to academic integrity. Faculty members suggested that course descriptions should be modified to accommodate the use of AI tools and address related concerns |

| Syed and Al-Rawi [ref. 40] | 2023 | To determine awareness, perceptions, and opinions regarding artificial intelligence among undergraduate pharmacy students | 73.9% of participants reported that they knew about AI tools. 69.4% of participants reported that AI tools could help healthcare professionals. 24.8% of participants stated that they could lose their jobs in the future because of AI tools. 10.2% of participants stated that they had received formal training on AI tools. 10.8% of participants stated that AI tools could facilitate patient education |

| Syed et al. [ref. 41] | 2024 | To assess the awareness and perceptions towards ChatGPT among academicians and researchers in Saudi Arabia | 91% of participants reported being familiar with the term ChatGPT. 68.7% of participants expressed a positive attitude towards ChatGPT. 77.1% of participants reported feeling somewhat comfortable using ChatGPT in their health practice, while 15.9% said they were very comfortable. 20.9% of participants had used ChatGPT in their research. 80.1% of participants had asked ChatGPT a question. 85.6% of participants believed that ChatGPT had a positive impact on education |

Regarding study population, most of the analyzed studies focused on undergraduate students in health-related disciplines, including those enrolled in colleges of medicine, pharmacy, dentistry, and applied medical sciences [ref. 27,ref. 28,ref. 29,ref. 30,ref. 31,ref. 33,ref. 34,ref. 35,ref. 39,ref. 40,ref. 42,ref. 46]. Two studies included only medical academics and researchers [ref. 32,ref. 41]. Four studies included both students and medical practitioners or researchers [ref. 43,ref. 44,ref. 45,ref. 47].

The earliest study identified in this review was published in 2020 [ref. 27]. All studies reported the total number of participants, which ranged from 13 to 1,212 per study. Student participants ranged from the pre-clinical (years 1, 2, and 3) to the clinical (years 4, 5, and 6) stages of medical college and were aged 18 to 25 years. In the six studies that included medical academics and professionals, the mean age of these participants ranged from 38 to 48 years. The analyzed studies included a total of 5,404 participants. All studies reported the gender distribution of participants, with an average of 43.9 ± 4.3% females and 56.1 ± 4.3% males. Research on AI and medical education in Saudi Arabia predominantly employed cross-sectional, questionnaire-based designs (15 of 18 studies), with a combined sample size of 5,331 participants. The remaining three studies used qualitative descriptive methods [ref. 29,ref. 42,ref. 43], incorporating direct interviews, focus group discussions, and online questionnaires, with a combined sample size of 103 participants.

Medical Students’ and Academics’ Attitudes Towards AI

In 17 of the 18 analyzed studies, attitudes toward AI tools were measured using various methods: a five-point Likert scale [ref. 28,ref. 30,ref. 31,ref. 33,ref. 35,ref. 39], a three-point Likert scale [ref. 32,ref. 34,ref. 40,ref. 41,ref. 44], direct interviews and focus group discussions [ref. 42,ref. 43], yes/no questions [ref. 45,ref. 47], a mixed approach combining a six-point Likert scale with direct interviews [ref. 29], or a seven-point Likert scale [ref. 27]. One study did not measure participants’ attitudes toward AI [ref. 36].

Cross-sectional studies indicated that both medical students and academics generally have a positive attitude toward integrating AI into medical education, with female participants showing greater enthusiasm than male participants. In most studies, participants believed that AI could enhance medical education and improve learning outcomes [ref. 27,ref. 29,ref. 30,ref. 32,ref. 33,ref. 34,ref. 35,ref. 39,ref. 41,ref. 42,ref. 43,ref. 44,ref. 45,ref. 46,ref. 47]. Similarly, qualitative studies involving focus group discussions and direct interviews reported that participants considered AI tools beneficial to medical education [ref. 29,ref. 42,ref. 43].

Despite these positive findings, some studies reported participants’ concerns about the ethical challenges of using AI tools in medical education, including risks to academic integrity, the potential for cheating, and the fear that AI could undermine the value of the medical professions [ref. 29,ref. 32,ref. 39,ref. 46].

Medical Students’ and Academics’ Knowledge About AI

Most participants across the analyzed studies reported using AI applications for educational purposes [ref. 27,ref. 28,ref. 29,ref. 30,ref. 34,ref. 35,ref. 39,ref. 41,ref. 42,ref. 43,ref. 44,ref. 45]. However, only a minority had received formal AI training; most acquired their knowledge of AI tools from informal sources, such as social media and friends. Many studies highlighted gaps in participants’ understanding of basic AI concepts and of how AI tools work [ref. 27,ref. 28,ref. 34,ref. 42,ref. 44].

Additionally, several studies reported that participants were well aware of the limitations of AI tools, including their potential to generate false information, violate ethical principles, and lack credibility [ref. 29,ref. 39,ref. 43,ref. 44,ref. 45]. In one study [ref. 39], half of the participants agreed that AI should be integrated into medical education but stressed that human judgment should take priority over AI-generated recommendations, suggesting that students view AI as a valuable tool but do not fully trust it. Confidence is another issue: although students frequently turn to AI for help, only a minority feel proficient with these tools [ref. 28,ref. 34,ref. 38,ref. 40]. Thus, despite frequent exposure to AI, many students do not fully understand how it works, how reliable it is, or how to critically evaluate its outputs. Many studies suggest that integrating AI education into medical curricula could help close this gap [ref. 27,ref. 31,ref. 32,ref. 33,ref. 34,ref. 43,ref. 44].

Regarding why medical students use AI tools, most studies reported that they use these tools to look up medical information, answer clinical questions, support research, and better understand complex medical concepts [ref. 29,ref. 30,ref. 35,ref. 39,ref. 44].

Discussion

This review provides an in-depth analysis of the current literature on the attitudes, knowledge, and practices of medical students and professionals in Saudi Arabia toward integrating AI tools into medical education. Our findings show a generally positive attitude toward AI integration in medical education, yet they also highlight knowledge gaps and concerns about ethical and practical implications.

Interpretation of Key Findings

A key finding of this review is that most of the analyzed studies employed a cross-sectional design, which limits their ability to identify long-term trends or causal relationships. Nevertheless, the findings consistently indicate that medical students and professionals recognize the advantages of AI tools in medical education. Participants stated that AI tools could enhance the learning experience and improve clinical decision-making and research capabilities. However, many also acknowledged the limitations of these tools and emphasized that human judgment remains necessary in medical decision-making. Although students often used AI tools for learning, formal AI training was uncommon; most learned about AI tools through social media, peer discussions, or self-study. This points to a significant gap in the medical curriculum. Incorporating AI education into the curriculum could help students better understand how these tools function, their benefits, and their limitations, and could cover crucial topics such as ethics, privacy, data security, and the potential for bias in AI algorithms. Such training could improve students’ learning experience and, ultimately, contribute to better patient care and treatment outcomes.

Methodological Considerations and Quality of Analyzed Studies

The quality assessment of the analyzed studies revealed several methodological limitations. A recurrent issue was insufficient control of confounding factors, such as prior exposure to AI technologies, disparities in institutional resources, or baseline differences in digital literacy among participants. Many studies relied on self-reported measures or ad hoc questionnaires lacking psychometric validation, which introduces a risk of bias and reduces reliability. Moreover, ambiguously defined study populations, such as heterogeneous cohorts (e.g., medical students, residents, and practicing clinicians grouped without stratification), compromised the interpretation of outcomes and limited the applicability of findings to specific educational or professional contexts. Other issues included poorly designed data collection tools that were unvalidated or contained multiple questions without clear objectives.
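Psychometric validation of a questionnaire typically includes, among other checks, an estimate of internal consistency, most often Cronbach’s alpha: alpha = k/(k - 1) × (1 - Σσᵢ²/σ_X²), where k is the number of items, σᵢ² is the variance of item i, and σ_X² is the variance of respondents’ total scores. As a minimal sketch of what such a check involves, the Python example below computes alpha for a small, entirely hypothetical set of five-point Likert responses. The function name `cronbach_alpha` and the data are illustrative assumptions, not material from any of the reviewed studies, and a high alpha alone does not establish that a questionnaire is valid.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items matrix (hypothetical helper).

    Each inner list holds one respondent's answers to the same k items.
    Sample variances (n - 1 denominator) are used throughout.
    """
    n_items = len(responses[0])
    if n_items < 2:
        raise ValueError("alpha needs at least two items")
    if any(len(row) != n_items for row in responses):
        raise ValueError("every respondent must answer every item")

    # Variance of each item across respondents (columns of the matrix).
    item_variances = [variance(col) for col in zip(*responses)]
    # Variance of each respondent's total (summed) score.
    total_variance = variance([sum(row) for row in responses])

    return (n_items / (n_items - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical five-point Likert answers: 5 respondents x 4 attitude items.
answers = [
    [4, 5, 4, 4],
    [2, 2, 3, 2],
    [5, 4, 5, 5],
    [3, 3, 3, 4],
    [1, 2, 1, 2],
]
print(round(cronbach_alpha(answers), 2))  # 0.96
```

Values of about 0.7 or higher are conventionally read as acceptable internal consistency, although such thresholds are rules of thumb rather than strict criteria, and validation usually also examines content and construct validity.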

Additionally, there is a lack of mixed-methods research that combines quantitative survey data with qualitative insights from interviews or focus groups. Without such research, key factors such as how institutions, teaching methods, and faculty attitudes influence AI adoption remain largely unexplored.

The publication profile of the analyzed studies also warrants caution: only 3 of the 18 were published in high-impact (Q1) journals according to Scopus or Web of Science rankings [ref. 34,ref. 38,ref. 39]. This limited representation in top-tier journals raises questions about the overall rigor and reliability of research in this field.

AI in education is still an emerging field, with much of the research in its early phases, which may partly explain this limited representation. Many studies may not yet meet the standards of high-impact journals, possibly because of weak methodologies or small sample sizes, which further limits the reliability and generalizability of their findings.

Additionally, some studies were based on small samples, which reduces their statistical power: such studies may fail to detect meaningful effects even when those effects exist, and their findings are difficult to generalize to larger populations. These limitations point to a need for more rigorous research methodologies and improved study designs. Future research should prioritize hypothesis-driven, intervention-based studies that rigorously evaluate both the cognitive and practical outcomes of AI integration in medical curricula.
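Sample size also governs how precisely a survey can estimate a proportion, such as the share of students who have received formal AI training, which is closely related to the power concern above. The sketch below assumes simple random sampling and the normal approximation, giving a 95% margin of error e = z × sqrt(p(1 - p)/n) and, inverting it, Cochran’s required sample size n = z² × p(1 - p)/e², with z = 1.96 and the conservative choice p = 0.5. The function names and the sample sizes plugged in are hypothetical; the sketch ignores finite-population correction, non-response, and the convenience sampling common in the reviewed surveys, and it does not apply to qualitative studies.

```python
import math

Z_95 = 1.96  # two-sided 95% critical value of the standard normal (rounded)


def margin_of_error(n: int, p: float = 0.5, z: float = Z_95) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion."""
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(e: float, p: float = 0.5, z: float = Z_95) -> int:
    """Smallest n whose margin of error is at most e (Cochran's formula)."""
    return math.ceil(z**2 * p * (1 - p) / e**2)


# Hypothetical survey sizes, not taken from the reviewed studies.
for n in (50, 385, 1000):
    print(f"n = {n:>4}: +/- {100 * margin_of_error(n):.1f} percentage points")

print(required_sample_size(0.05))  # 385
print(required_sample_size(0.03))  # 1068
```

Under these assumptions, a survey of 50 respondents estimates a proportion only to within about ±14 percentage points, whereas 1,000 respondents narrow this to about ±3 points, and roughly 385 respondents are needed for ±5 points.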

Ethical and Practical Challenges

This review highlighted several ethical concerns about using AI in medical education. Participants often expressed worries about maintaining academic integrity, the risk of AI-assisted cheating, and the potential devaluation of medical expertise. These concerns underscore the necessity for regulatory guidelines and ethical frameworks to ensure responsible AI use in medical education. Institutions should establish clear policies on the acceptable use of AI tools in coursework, assessments, and clinical decision-making to address these concerns.

Additionally, there is a clear need to improve students’ confidence in using AI tools. Many participants expressed concerns about their ability to critically assess AI-generated information. This lack of confidence suggests that AI literacy should be integrated into medical training.

Implications for Medical Education

Our findings highlight the importance of integrating AI education into medical curricula. Given that most students acquire AI-related knowledge through informal means, structured AI training programs should be developed to provide foundational knowledge on AI applications, limitations, and ethical considerations.

Additionally, interdisciplinary collaboration between medical educators, AI experts, and policymakers is essential to develop standardized AI curricula that align with global best practices.

Comparison to Global Findings

The findings of this review indicate that medical students in Saudi Arabia generally have a positive attitude toward integrating AI tools into their education. Survey-based studies from other settings have reported both positive [ref. 48,ref. 49,ref. 50,ref. 51,ref. 52,ref. 53,ref. 54,ref. 55,ref. 56,ref. 57,ref. 58] and negative [ref. 59,ref. 60] attitudes toward AI, reflecting the varied perspectives that healthcare students hold about AI technology. Mousavi Baigi and colleagues (2023) systematically reviewed 38 studies on healthcare students’ attitudes, knowledge, and skills regarding AI in high-income as well as low- and middle-income countries. They reported that most negative attitudes were found in high-income countries, whereas positive attitudes predominated in low- and middle-income countries [ref. 61]. In contrast, an online survey of medical physics professionals and students in both developed and developing countries found that 85% of participants agreed that AI would play a prominent role in the practice of medical physicists, with most of those who agreed coming from developed countries [ref. 53]. These findings suggest that both medical students and educators, whether in developed or developing countries, should receive AI training.

In addition, our findings indicate that most medical students in Saudi Arabia were familiar with AI tools, yet many reported significant gaps in their understanding of AI technology, in line with the findings of Mousavi Baigi and colleagues (2023). These gaps may be attributable to the lack of structured AI training in medical programs. Integrating AI-focused courses and practical training into the medical curriculum could substantially improve students’ understanding of AI in medicine. Concerns about ethics, data privacy, and the reliability of AI-driven decisions also persist [ref. 61].

Future Research Directions

This systematic review identifies several important areas for future research on AI in medical education in Saudi Arabia. A major limitation of the existing literature is the lack of studies involving direct AI interventions. Future research should therefore investigate how effectively AI applications improve learning outcomes and clinical skills, and evaluate their overall impact on medical education. In-depth studies of the long-term effects of AI are also needed, addressing both medical education as a whole and specific learning outcomes. Moreover, further research is needed on the potential risks of integrating AI into the medical curriculum. As AI technologies and tools become more widespread, issues such as automation bias, over-reliance on technology, data privacy, over- and under-skilling, and the potential to widen existing disparities in education should be studied extensively.

Furthermore, AI-based educational studies conducted in Saudi Arabia should adopt well-established guidelines for assessing research quality to ensure robust and reliable data and outcomes. Finally, integrating AI into medical education requires interdisciplinary collaboration that merges expertise from healthcare, data science, education, and ethics. This multidisciplinary approach is essential for establishing frameworks and evidence-based guidelines for integrating AI into medical curricula.

Limitations

This review has two main limitations. First, many of the included studies were cross-sectional in design and relied on self-reported data, which may introduce response bias and limits the ability to draw causal conclusions. In addition, the diversity of study designs and outcome measurement tools made it difficult to compare findings across studies and precluded a meaningful meta-analysis. Second, given the rapid pace at which artificial intelligence is evolving, some of the findings discussed in this review may soon become outdated. Nevertheless, this review offers a useful reference point for future research, particularly for tracking how views on using AI tools in medical education change over time.

Conclusions

This systematic review demonstrates that while medical students and professionals in Saudi Arabia generally hold positive views on integrating AI tools into medical education, notable knowledge gaps and ethical concerns still exist. Our findings emphasize the need for more structured AI training within medical programs, clear ethical guidelines for using AI, and further research into the long-term effects of AI on medical training and practice. Addressing these issues is crucial to ensuring that AI becomes a valuable tool in medical education, enhancing learning experiences while upholding academic and professional integrity.

References

- JM Górriz, J Ramírez, A Ortíz. Artificial intelligence within the interplay between natural and artificial computation: advances in data science, trends and applications. Neurocomputing, 2020

- M Bond, H Khosravi, M De Laat. A meta-systematic review of artificial intelligence in higher education: a call for increased ethics, collaboration, and rigour. Int J Educ Technol High Educ, 2024

- FK Fadlelmula, SM Qadhi. A systematic review of research on artificial intelligence in higher education: practice, gaps, and future directions in the GCC. J Univ Teach Learn Pract, 2024

- K Zhang, AB Aslan. AI technologies for education: recent research & future directions. Comput Educ AI, 2021

- H Crompton, D Burke. Artificial intelligence in higher education: the state of the field. Int J Educ Technol High Educ, 2023

- TKF Chiu, Q Xia, X Zhou, CS Chai, M Cheng. Systematic literature review on opportunities, challenges, and future research recommendations of artificial intelligence in education. Comput Educ AI, 2023

- S Iqbal, S Ahmad, K Akkour. Review article: impact of artificial intelligence in medical education. MedEdPublish, 2021

- F Boninger, A Molnar, C Saldaña. Big claims, little evidence, lots of money: the reality behind the Summit Learning Program and the push to adopt digital personalized learning platforms. Commercialism in Education Research Unit, 2025

- B Williamson, R Eynon. Historical threads, missing links, and future directions in AI in education. Learn Media Technol, 2020

- TK Chiu. A holistic approach to the design of artificial intelligence (AI) curriculum for K-12 schools. TechTrends, 2021

- TK Chiu, H Meng, CS Chai, I King, SW Wong, Y Yam. Creation and evaluation of a pretertiary artificial intelligence (AI) curriculum. IEEE Trans Educ, 2021

- Q Xia, TK Chiu, M Lee, IT Sanusi, Y Dai, CS Chai. A self-determination theory (SDT) design approach for inclusive and diverse artificial intelligence (AI) education. Comput Educ, 2022

- SAM Aldosari. The future of higher education in the light of artificial intelligence transformations. Int J Higher Educ, 2020

- PK Kuhl. Developing Minds in the Digital Age: Towards a Science of Learning for 21st Century Education. 2019

- F Miao, W Holmes, R Huang, H Zhang. AI and education: A guidance for policymakers. 2021

- C Cath. Governing artificial intelligence: ethical, legal and technical opportunities and challenges. Philos Trans A Math Phys Eng Sci, 2018

- AA Hussin. Education 4.0 made simple: ideas for teaching. Int J Educ Lit Stud, 2018

- J Car, A Sheikh, P Wicks, MS Williams. Beyond the hype of big data and artificial intelligence: building foundations for knowledge and wisdom. BMC Med, 2019

- M Gordon, M Daniel, A Ajiboye. A scoping review of artificial intelligence in medical education: BEME Guide No. 84. Med Teach, 2024

- J Lee, AS Wu, D Li, KM Kulasegaram. Artificial intelligence in undergraduate medical education: a scoping review. Acad Med, 2021

- C Preiksaitis, C Rose. Opportunities, challenges, and future directions of generative artificial intelligence in medical education: scoping review. JMIR Med Educ, 2023

- A Bozkurt, A Karadeniz, D Baneres, AE Guerrero-Roldán, ME Rodríguez. Artificial intelligence and reflections from educational landscape: a review of AI studies in half a century. Sustainability, 2021

- O Karaca, SA Çalışkan, K Demir. Medical artificial intelligence readiness scale for medical students (MAIRS-MS) – development, validity and reliability study. BMC Med Educ, 2021

- V González-Calatayud, P Prendes Espinosa, R Roig-Vila. Artificial intelligence for student assessment: a systematic review. Appl Sci, 2021

- A Nigam, R Pasricha, T Singh, P Churi. A systematic review on AI-based proctoring systems: Past, present and future. Educ Inf Technol (Dordr), 2021

- HMM Al-Zyoud. The role of artificial intelligence in teacher professional development. Univers J Educ Res, 2020

- A Bin Dahmash, M Alabdulkareem, A Alfutais. Artificial intelligence in radiology: does it impact medical students’ preference for radiology as their future career? BJR Open, 2020

- SA Alghamdi, Y Alashban. Medical science students’ attitudes and perceptions of artificial intelligence in healthcare: a national study conducted in Saudi Arabia. J Radiat Res Appl Sci, 2024

- O Alqarni, S Curle, HS Mahdi, JK Mohammed Ali. Medical students’ perceptions of ChatGPT integration in English medium instruction: a study from Saudi Arabia. Forum Linguist Stud, 2024

- A Al Shahrani, N Alhumaidan, Z AlHindawi, A Althobaiti, K Aloufi, R Almughamisi, A Aldalbahi. Readiness to embrace artificial intelligence among medical students in Saudi Arabia: a national survey. Healthcare (Basel), 2024

- FA Alwadani, A Lone, MT Hakami. Attitude and understanding of artificial intelligence among Saudi medical students: an online cross-sectional study. J Multidiscip Healthc, 2024

- A Abdelnasser, W Aleqbali, YAB Jarfan, R Al Ansari, J Nayem, LS Alhazmi, NM Abd El-Fadeal. Medical educators’ perspective regarding the integration of artificial intelligence and robotics in undergraduate curricula and healthcare systems, Makkah Province, Saudi Arabia. Ann For Res, 2025

- AF Almarzouki, A Alem, F Shrourou. Assessing the disconnect between student interest and education in artificial intelligence in medicine in Saudi Arabia. BMC Med Educ, 2025

- SE Elhassan, MR Sajid, AM Syed, SA Fathima, BS Khan, H Tamim. Assessing familiarity, usage patterns, and attitudes of medical students toward ChatGPT and other chat-based AI apps in medical education: cross-sectional questionnaire study. JMIR Med Educ, 2025

- BF ALruwail, AM Alshalan, A Thirunavukkarasu, A Alibrahim, AM Alenezi, TZ Aldhuwayhi. Evaluation of health science students’ knowledge, attitudes, and practices toward artificial intelligence in northern Saudi Arabia: implications for curriculum refinement and healthcare delivery. J Multidiscip Healthc, 2025

- A Liberati, DG Altman, J Tetzlaff. The PRISMA statement for reporting systematic reviews and meta-analyses of studies that evaluate healthcare interventions: explanation and elaboration. BMJ, 2009

- Joanna Briggs Institute. Checklist for qualitative research. The Joanna Briggs Institute critical appraisal tools for use in JBI systematic reviews, 2025

- C Lockwood, Z Munn, K Porritt. Checklist for analytical cross-sectional studies. JBI Evidence Implementation, 2025

- MK Alharbi, W Syed, A Innab, M Basil A Al-Rawi, A Alsadoun, A Bashatah. Healthcare students’ attitudes, opinions, perceptions and perceived obstacles regarding ChatGPT in Saudi Arabia: a survey-based cross-sectional study. Sci Rep, 2024

- W Syed, M Basil A Al-Rawi. Assessment of awareness, perceptions, and opinions towards artificial intelligence among healthcare students in Riyadh, Saudi Arabia. Medicina (Kaunas), 2023

- W Syed, A Bashatah, K Alharbi, SS Bakarman, S Asiri, N Alqahtani. Awareness and perceptions of ChatGPT among academics and research professionals in Riyadh, Saudi Arabia: implications for responsible AI use. Med Sci Monit, 2024

- FA Alrashed, T Ahmad, MM Almurdi, AA Alderaa, SA Alhammad, M Serajuddin, AM Alsubiheen. Incorporating technology adoption in medical education: a qualitative study of medical students’ perspectives. Adv Med Educ Pract, 2024

- SM Salih. Perceptions of faculty and students about use of artificial intelligence in medical education: a qualitative study. Cureus, 2024

- IM Gowdar, AA Alateeq, AM Alnawfal, AF Alharbi, AM Alhabshan, SM Aldawsari, NA AlHarbi. Artificial intelligence and its awareness and utilization among dental students and private dental practitioners at Alkharj, Saudi Arabia. J Pharm Bioallied Sci, 2024

- AM Alshanberi, AH Mousa, SA Hashim. Knowledge and perception of artificial intelligence among faculty members and students at Batterjee Medical College. J Pharm Bioallied Sci, 2024

- H Fadil, Y Alahmadi. Evaluation of awareness, perceptions and opinions of artificial intelligence (AI) among healthcare students-A cross-sectional study in Saudi Arabia. Trop J Pharm Res, 2024

- HA Alwadaani. Knowledge, perceptions, and practice of artificial intelligence among medical students and doctors at King Faisal University, Saudi Arabia. Majmaah J Health Sci, 2024

- T Boillat, FA Nawaz, H Rivas. Readiness to embrace artificial intelligence among medical doctors and students: questionnaire-based study. JMIR Med Educ, 2022

- D Pinto Dos Santos, D Giese, S Brodehl. Medical students’ attitude towards artificial intelligence: a multicentre survey. Eur Radiol, 2019

- M Banerjee, D Chiew, KT Patel. The impact of artificial intelligence on clinical education: perceptions of postgraduate trainee doctors in London (UK) and recommendations for trainers. BMC Med Educ, 2021

- H Ejaz, H McGrath, BL Wong, A Guise, T Vercauteren, J Shapey. Artificial intelligence and medical education: A global mixed-methods study of medical students’ perspectives. Digit Health, 2022

- E Jussupow, K Spohrer, A Heinzl. Identity threats as a reason for resistance to artificial intelligence: survey study with medical students and professionals. JMIR Form Res, 2022

- JC Santos, JH Wong, V Pallath, KH Ng. The perceptions of medical physicists towards relevance and impact of artificial intelligence. Phys Eng Sci Med, 2021

- M Teng, R Singla, O Yau. Health care students’ perspectives on artificial intelligence: countrywide survey in Canada. JMIR Med Educ, 2022

- EA Wood, BL Ange, DD Miller. Are we ready to integrate artificial intelligence literacy into medical school curriculum: students and faculty survey. J Med Educ Curric Dev, 2021

- E Yüzbaşıoğlu. Attitudes and perceptions of dental students towards artificial intelligence. J Dent Educ, 2021

- CJ Park, PH Yi, EL Siegel. Medical student perspectives on the impact of artificial intelligence on the practice of medicine. Curr Probl Diagn Radiol, 2021

- AQ Tran, LH Nguyen, HS Nguyen. Determinants of intention to use artificial intelligence-based diagnosis support system among prospective physicians. Front Public Health, 2021

- S Bisdas, CC Topriceanu, Z Zakrzewska. Artificial intelligence in medicine: a multinational multi-center survey on the medical and dental students’ perception. Front Public Health, 2021

- C Blease, A Kharko, M Bernstein. Machine learning in medical education: a survey of the experiences and opinions of medical students in Ireland. BMJ Health Care Inform, 2022

- SF Mousavi Baigi, M Sarbaz, K Ghaddaripouri, M Ghaddaripouri, AS Mousavi, K Kimiafar. Attitudes, knowledge, and skills towards artificial intelligence among healthcare students: a systematic review. Health Sci Rep, 2023